It’s insane to think that there are still Marxists out there, most of whom live comfortable lives in wealthy capitalist countries. Back in 2019, my colleague (and new friend!) Mike Huemer wrote (my emphasis):

“I’ve been known to cite Marxism as an example of an irrational political belief. This is controversial in intellectual circles (indeed, some will probably be outraged by this post), but that doesn’t prevent it from being clearly true; it just means that certain forms of irrationality are popular in intellectual circles. In fact, I regard Marxism as the paradigm of an irrational political belief; if it’s not irrational, nothing is. The theory has been as soundly refuted as a social theory can be. Sometimes, people ask me to explain why I say this.

“Let me start with why I say it’s been soundly refuted.

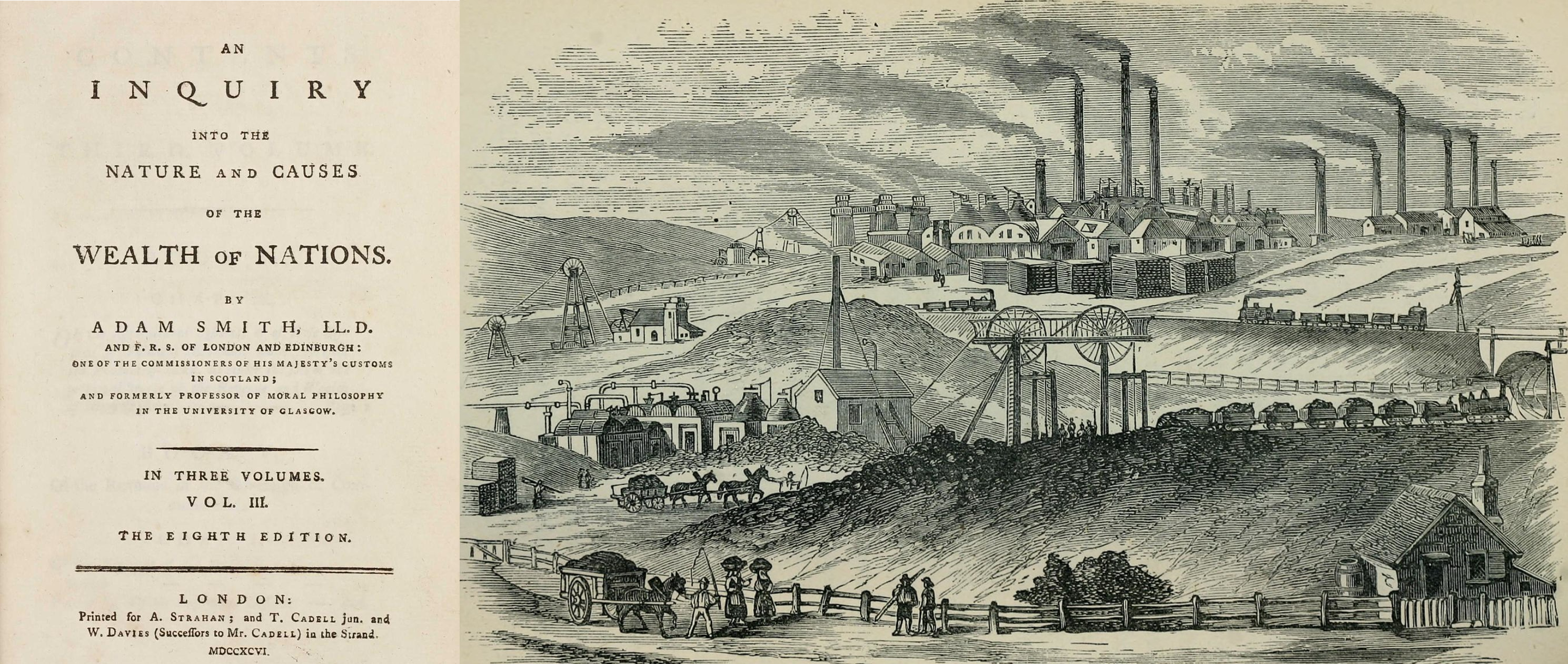

“a. Theoretical developments: Shortly after Marx wrote, his underlying economic theory was rejected by essentially the entire field and superseded by a better theory. Virtually no one who studies the subject (outside of oppressive Marxist regimes) believes the labor theory of value anymore. Without the labor theory of value, there’s no theory of surplus value, no theory of exploitation, and thus the central critique of capitalism fails. If you don’t know what I’m talking about, read any standard text on price theory. If you learn modern price theory, you are going to agree with it, and you are going to reject the labor theory as well. It’s that clear.

“b. Historical developments: Marxism was tried many times. It was tried in many countries with different cultures, on every continent except Australia and Antarctica.

“By different people, with different variations on the theory, at different times. Every time it went horribly wrong. Not just once or twice, and not just slightly wrong. In the best cases, it resulted in severe poverty and abuse of power. In the worst, it resulted in the greatest human atrocities in history. In total, between 100 and 150 million people were killed by their own, Marxist governments in the twentieth century. To be a Marxist, as far as I understand what that means, is to believe that, knowing all this, we should try again.

“c. Predictability: In case you are tempted to say that Marx couldn’t have anticipated this: yes, he could. It’s hardly difficult to figure out that giving total power to the state might cause some problems — it’s not as if the history of government had been completely clean up til the 20th century, when suddenly, for the first time in history, people with power started to abuse it. Nor is this just some right-wing ideological point.”
