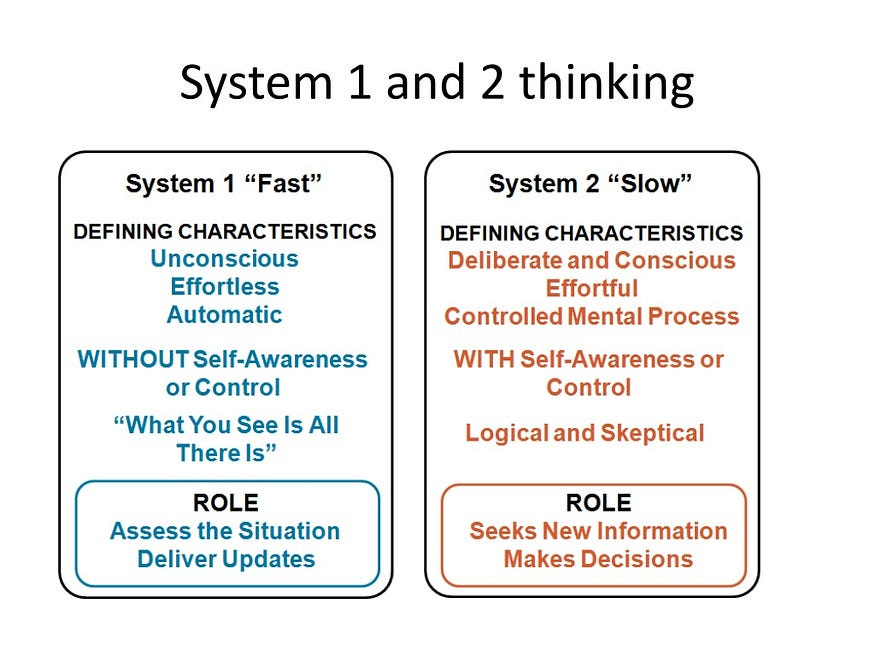

I have spent a lot of time this month reading and thinking about the following question: What overall impact will A.I. have on our critical thinking skills? In plain English, will A.I. make us smarter and better-informed citizens, or will it make us dumber or more mentally lazy? Today, I want to conclude my series of blog posts on this big question with a procedural point. Specifically, who should have the burden of proof in this debate, the proponents of A.I. or the opponents? And how high should that burden be? Proof beyond a reasonable doubt? Clear and convincing evidence? Preponderance of the evidence? Probable cause? Or something else?

In brief, the burden of proof is a key feature of legal trials: in order to secure a conviction in a criminal case or an award of money damages in a civil case, the moving party must produce sufficient evidence or proof that its allegations are true. (In the Anglo-American legal tradition, the standard is “proof beyond a reasonable doubt” in criminal cases, while the “preponderance of the evidence” standard is used in most civil cases.) This concept is also relevant to many areas of life beyond law, including the ongoing debates about A.I.

For my part, I agree with Tyler Cowen that the burden of proof should be on the opponents of A.I. (Professor Cowen, a proponent of A.I., had replied to one of my previous posts by email that the “Burden of proof [is] not on me!”) Why? Because we don’t want to hamper innovation and technical progress unless and until we have sufficient evidence that the harms of a given innovation outweigh its benefits. Moreover, the opponents of innovation should not only bear the burden of proof; their burden should also be a high one: “clear and convincing” proof.