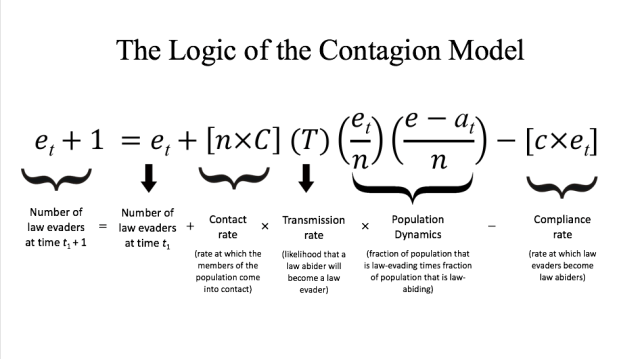

In our previous post, we proposed the possibility of a “contagion model” of legal evasion, noting that such a model is plausible given that people tend to copy or imitate what other people are doing. Here, we present the details of our model. We start with a finite population of actors consisting of some number of law evaders and of law abiders as follows:

- Let n be number of people in a given population.

- Let et be the number of law evaders in population n at time t.

- Let n – et be the number of law abiders at time t.

- Let C (big C) be the level of contact between people in the population.

- Let Τ be the contagion or transmission rate; i.e. the likelihood that a law abider will become a law evader.

- And let c (little c) be the natural or constant level of compliance in the population.

As a result, c × et is the compliance rate; i.e. the rate at which law evaders become law abiders, and n × C is the contact rate; i.e. the rate at which the members of a population meet or come into contact with each other. (Note: the variable big C is what distinguishes our contagion model from information cascade models, which assume that the public behavior and decisions of all actors are common knowledge. In our contagion model, by contrast, each actor meets (and thus observes) a limited number of fellow actors, depending on the value taken by big C.)

Given these variables and parameters, we now present the logic of our contagion model as follows:

Thankfully, our contagion equation above can be simplified through algebraic manipulation as follows:

Thankfully, our contagion equation above can be simplified through algebraic manipulation as follows:

et + 1 = et + et × {C × T × [(n – et)/n] – c}

Notice that as the variable et approaches zero, the (n – et)/n term in the equation above approaches 1, so the model can be further simplified as follows:

et + 1 = et + et × [C × T – c]

As a result, our model tells us that law-evading behavior will spread when C times T > c. In words: when the contact rate times the transmission rate is greater than the rate of compliance, law-evading behavior will spread through the population because the number of law abiders changing their behavior as they come into contact with law evaders is greater than the natural rate of compliance in the population.

But what are the values for C, T, and c? Broadly speaking, we would expect these parameters to vary depending on the actual population of actors we are modelling. By way of example, we would expect big C, the level of contact, to be high in a mobile and modern population and low in a rural or pre-modern population. Moreover, we would expect T, the transmission rate, to vary depending on the ratio of law abiders to law evaders in the population, and we would expect little c, the level of compliance in a population, to depend (endogenously) on the general level of trust in a given population and (exogenously) on the levels of detection uncertainty as well as legal uncertainty. In our next post, we will consider the interaction between both types of uncertainty: detection uncertainty and legal uncertainty.