The map pictured below visualizes the average daily totals of people entering the USA from Mexico by car, bus, or on foot at various ports of entry along the U.S.-Mexico border.

Credit: The Los Angeles Times

Hat tip: @pickover

We cracked open this mammoth tome in March and are now on page 430, having just finished reading David Foster Wallace’s allegory about the allocation of scarce resources, which also happens to be one of the most fundamental questions in a wide variety of scholarly disciplines, including economics, political philosophy, and law. DFW’s haunting allegory is set on a ledge in the Sonora Desert, where a pair of the most memorable characters in North American literature, Hugh (Helen) Steeply and Remy Marathe, discuss a hypothetical scenario involving a single-serving portion of pea soup. (My colleague Linda Essig provides a good summary of the substance of Steeply and Marathe’s ruminations here.) We shall press on and provide additional updates next month.

Source: Jon Beasley-Murray

Thus far, we have identified several common forms of “data fraud,” including cherry picking, data dredging, and the false cause fallacy. Yet all of these forms of data fraud might be mere symptoms of a larger problem: publication bias. Just as TV and print media compete to report on the most salient or salacious events that will grab their viewers’ or readers’ attention (“If it bleeds, it leads”), scientific journals likewise compete to publish studies with the most exciting, novel, or “sexy” findings. But the problem with this fetish for novelty or salience is that it generates a scholarly market failure, one resulting in the overproduction of sexy studies. In the words of the good folks at Geckoboard (a UK-based consulting firm), “For every study that shows statistically significant results, there may have been many similar tests that were inconclusive…. Not knowing how many ‘boring’ studies were filed away impacts our ability to judge the validity of the results we read about. When a company claims a certain activity had a major positive impact on growth, other companies may have tried the same thing without success, so they don’t talk about it.” That is why both the news media and the most prestigious scholarly journals often end up presenting such a distorted picture of reality.

Credit: Franco, et al.
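To make the publication-bias argument concrete, here is a minimal simulation sketch. Every detail is an assumption chosen for illustration, not something taken from Geckoboard or Franco et al.: the true effect is exactly zero, each hypothetical study draws 25 observations with a known standard deviation of 1, and a study counts as "publishable" only if it clears the conventional two-sided 5% threshold (|z| > 1.96).

```python
import math
import random

def run_study(rng, n=25):
    """Simulate one hypothetical study of an effect whose true size is zero."""
    sample = [rng.gauss(0, 1) for _ in range(n)]
    effect = sum(sample) / n        # estimated effect (sample mean)
    z = effect * math.sqrt(n)       # z-score, assuming a known std. dev. of 1
    return effect, abs(z) > 1.96    # "significant" at the two-sided 5% level

rng = random.Random(42)             # fixed seed so the run is repeatable
studies = [run_study(rng) for _ in range(2000)]

# Only the "exciting" (significant) studies make it into print.
published = [effect for effect, significant in studies if significant]

mean_abs_all = sum(abs(e) for e, _ in studies) / len(studies)
mean_abs_published = sum(abs(e) for e in published) / len(published)

print(f"studies run:       {len(studies)}")
print(f"studies published: {len(published)}")
print(f"average |effect|, all studies:       {mean_abs_all:.3f}")
print(f"average |effect|, published studies: {mean_abs_published:.3f}")
```

Under these assumptions, a reader who sees only the published studies would conclude that the effect is sizable, even though every study was measuring nothing at all. The "boring" studies that were filed away are exactly the ones needed to correct that impression.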

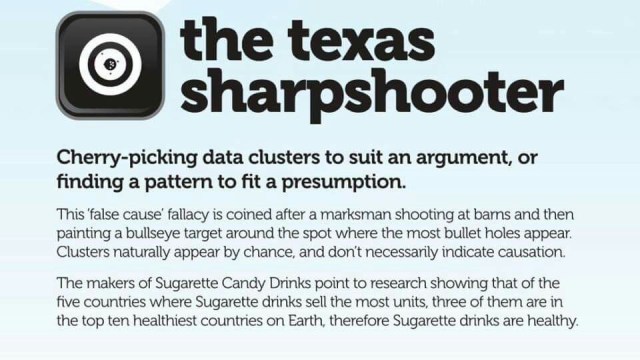

Thus far we have seen the related statistical sins of cherry picking and data dredging. Today, let’s talk about the false cause fallacy (or “false causality” for short), which occurs when you observe two events that appear together and then leap to the conclusion that one event must have caused the other. (Here is a mundane example. The video below presents many more.) In reality, just because two events occur together does not mean that one caused the other. The causation may run in the opposite direction, some unobserved third factor might be the underlying cause of both events, or there might be no direct or indirect causation at all!
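The "unobserved third factor" case can be sketched with a small simulation. The variable names and numbers below are hypothetical: a hidden factor drives two outcomes, `x` and `y`, which never influence each other. The raw data nonetheless show a strong correlation, and it vanishes once the hidden factor is subtracted out.

```python
import math
import random

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

rng = random.Random(7)  # fixed seed so the run is repeatable
n = 2000

# Hidden third factor (hypothetical), unobserved by the analyst.
hidden = [rng.gauss(0, 1) for _ in range(n)]

# x and y each depend on the hidden factor plus their own noise.
# Neither one has any causal effect on the other.
x = [h + rng.gauss(0, 0.5) for h in hidden]
y = [h + rng.gauss(0, 0.5) for h in hidden]

raw_r = pearson_r(x, y)

# Remove the hidden factor's contribution and correlate what is left.
x_rest = [xi - h for xi, h in zip(x, hidden)]
y_rest = [yi - h for yi, h in zip(y, hidden)]
adjusted_r = pearson_r(x_rest, y_rest)

print(f"correlation of x and y:                    {raw_r:.2f}")
print(f"correlation after removing hidden factor:  {adjusted_r:.2f}")
```

In real data the hidden factor is, by definition, not available to subtract, which is why a strong correlation on its own cannot settle the question of what caused what.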

Let’s proceed with our parade of fraudulent data practices, shall we? Next up is data dredging (a/k/a “p-hacking”), a more sophisticated (and less transparent) form of cherry picking. In the words of Wikipedia: “The process of data dredging involves automatically testing huge numbers of hypotheses about a single data set by exhaustively searching … for combinations of variables that might show a correlation ….” This form of data fraud thus occurs when researchers perform multiple statistical tests on a single set of data and then selectively publish only those results that satisfy some test of statistical significance. Such ex post results, however, are often just spurious correlations. The lesson here is this: beware of so-called “statistically significant” results. To avoid perpetrating this form of data fraud (and reduce positive-results bias to boot), some journals and funding organizations are now requiring researchers to preregister their clinical trials, stating in advance what hypotheses they are going to be testing.
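Here is a minimal sketch of how data dredging manufactures spurious correlations. All of the data are random noise, so there is nothing real to find. The setup is an assumption for illustration: 1,000 unrelated candidate variables are each tested against a single outcome, using 30 observations apiece, and the cutoff of 0.361 is the standard table value for a two-sided 5% test of a correlation with 30 observations.

```python
import math
import random

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def dredge(n_variables=1000, n_samples=30, critical_r=0.361, seed=0):
    """Test many pure-noise variables against one pure-noise outcome.

    Returns (index, r) for every variable whose correlation with the
    outcome clears the significance cutoff purely by chance.
    """
    rng = random.Random(seed)
    outcome = [rng.gauss(0, 1) for _ in range(n_samples)]
    hits = []
    for i in range(n_variables):
        candidate = [rng.gauss(0, 1) for _ in range(n_samples)]
        r = pearson_r(candidate, outcome)
        if abs(r) > critical_r:
            hits.append((i, r))
    return hits

hits = dredge()
print(f"{len(hits)} of 1000 unrelated variables look 'significant'")
```

Roughly one test in twenty should clear the bar by chance alone, so a researcher who runs a thousand tests and reports only the hits will always have something "significant" to publish. Preregistration blocks this move by fixing the hypothesis before the data are examined.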

We presented a collection of fraudulent data practices in our previous post. Now, let’s consider each fraudulent technique in turn, beginning with the “Texas sharpshooter fallacy” or cherry picking: the practice of selecting results that fit your claim and excluding those that don’t. According to the good folks at Geckoboard (a London-based consulting firm), this practice is “[t]he worst and most harmful example of being dishonest with data. When making a case, …. people often only highlight data that backs their case, rather than the entire body of results. It’s prevalent in public debate and politics, where two sides can both present data that backs their position. Cherry picking can be deliberate or accidental. Commonly, when you’re receiving data second hand, there’s an opportunity for someone choosing what data to share to distort the truth to whatever opinion they’re peddling. When on the receiving end of data, it’s important to ask yourself: ‘What am I not being told?’”
