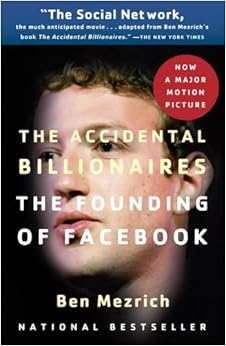

This fall, we are teaching a large undergraduate survey course (n > 800) on “the legal and ethical environment of business.” Instead of trying to cover everything, we will focus on the founding and subsequent explosive growth of Facebook–as depicted in the bestseller “The Accidental Billionaires” by Ben Mezrich (pictured below) and the movie “The Social Network”–in order to explore various areas of the legal and ethical environment of business, including the law of contracts (think of Facebook’s “terms of use”), intellectual property (think of Facebook’s logos, brand, and “Like” symbols), choice of business entity (think of Facebook’s evolution from a two-man partnership into a Florida limited liability company before incorporating in the State of Delaware), and many other relevant legal and ethical topics, such as the ethics of Facebook’s privacy policies. Although the Professor is not a big fan of Facebook, we think our focus on the founding of Facebook makes good sense for two reasons. First, our target audience consists of undergraduates, most of whom use some form of social media to connect with the wider world. Second, it was a motley crew of college students who ended up creating one of the most successful Internet platforms in the world today. Mark Zuckerberg literally changed the world, so why not learn from his successes … and from some of his mistakes?

The Simple Sabotage Field Manual

Via kottke, we found this 20-page government-issued, World War II-era guidebook called the Simple Sabotage Field Manual. University administrators and business managers, take note. Here is tip #3:

Organizations and Conferences: When possible, refer all matters to committees, for “further study and consideration.” Attempt to make the committees as large and bureaucratic as possible. Hold conferences when there is more critical work to be done.

Use at your own risk

Ethical machines (part 3 of 3)

In our previous posts, we presented Brett Frischmann’s novel idea of a Reverse Turing Test, i.e. the idea of testing the ability of humans to think like a machine or a computer. But how would we create such a test? For his part, Frischmann proposes four criteria (pictured below, via John Danaher) for creating a Reverse Turing Test. Here, we consider Frischmann’s fourth criterion: rationality. (His first two criteria–mathematical computation and random number generation–do not appear to carry any moral significance, while his third criterion–common sense or folk wisdom–seems better suited to Alan Turing’s original test than to a reverse one.)

By rationality, Frischmann means instrumental or ends-means rationality. Consider the rational actor/utility-maximization model in economics (homo economicus) or the assumption of hyper-rationality in traditional (i.e. non-evolutionary) game theory: “I know that you know that I know …” Many human decisions, however, are emotive or irrational in nature, such as falling in love, overeating, suicide, etc. Given this disparity between machine-like rationality and human-like emotions, we should in principle be able to create a Reverse Turing Test to measure how rational or machine-like a person is. The more instrumental and less emotional a person is, the closer he or she would be to passing Frischmann’s hypothetical Reverse Turing Test.
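
To make the idea of “measuring” rationality concrete, here is a minimal sketch in Python. It is our own toy illustration, not anything proposed by Frischmann or Danaher: the function name `rationality_score`, the payoff numbers, and the scoring rule (the share of decisions in which a person picks the highest-payoff option) are all assumptions made up for this example.

```python
from typing import Dict, List, Tuple

# Hypothetical data format: each trial pairs the payoff of every available
# option with the option the person actually chose.
Trial = Tuple[Dict[str, float], str]


def rationality_score(trials: List[Trial]) -> float:
    """Return the fraction of trials in which the chosen option had the highest payoff."""
    if not trials:
        raise ValueError("need at least one trial")
    hits = 0
    for payoffs, chosen in trials:
        best = max(payoffs.values())
        # A choice counts as "instrumentally rational" if no option pays more.
        if payoffs[chosen] == best:
            hits += 1
    return hits / len(trials)


# Made-up decisions: three payoff-maximizing choices and one "emotive" one.
trials = [
    ({"save": 10.0, "splurge": 4.0}, "save"),
    ({"salad": 6.0, "cake": 3.0}, "cake"),
    ({"wait": 2.0, "act": 8.0}, "act"),
    ({"a": 5.0, "b": 5.0}, "b"),
]
print(rationality_score(trials))
```

On this toy scale, a score near 1 would be “machine-like” and a score near 0 would not. A real test would of course have to settle where the payoff numbers come from, which is arguably the hard part.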

Does the rationality component of the Reverse Turing Test have any ethical implications? John Danaher thinks so: “This Reverse Turing Test has some ethical and political significance. The biases and heuristics that define human reasoning are often essential to what we deem morally and socially acceptable conduct. Resolute utility maximisers [have] few friends in the world of ethical theory. Thus, to say that a human is too machine-like in their rationality might be to pass ethical judgment on their character and behavior.” (See his 21 July blog post.) We, however, are not so sure what the ethical implications of Frischmann’s rationality criterion are. John Rawls’s famous “original position” thought-experiment, for example, is premised on the rational actor model, and theories of consequentialism (such as rule-utilitarianism) form a major tributary in the infinite river of moral philosophy. In other words, to the extent machines are far less emotional and more instrumentally rational than humans, might machines potentially have a greater ethical capacity than humans?

Credit: John Danaher

Thinking like a machine (part 2 of 3)

In our previous post, we mentioned John Danaher’s excellent review of Brett Frischmann’s 2014 paper exploring the possibility of a Reverse Turing Test. One of the insightful contributions Frischmann makes to the voluminous literature on the Turing Test is his idea of a Turing Line, or the fuzzy line that separates humans from machines. According to Frischmann, this line serves two essential functions: (1) it differentiates humans from machines (and machines from humans, we would add), and (2) it demarcates a “finish line” or goal. In other words, for a machine to pass Turing’s original test, it must be able to cross this imaginary line by deceiving us into believing that it is human. Most of the literature in this area focuses on the human side of the line: will a machine ever be capable of crossing this boundary? Frischmann, however, focuses on the machine side of the line. (In the words of Danaher: “Instead of thinking about the properties or attributes that are distinctively human, [Frischmann is] thinking about the properties and attributes that are distinctly machine-like.”) In particular, Frischmann poses a different and far more original question: will a human ever be able to deceive another person (or a machine) into believing that he or she is a machine? But what does it mean to “think like a machine”? We shall discuss that difficult question in our next post …

Credit: Brett Frischmann (via John Danaher)

Reverse Turing Tests and Ethical Machines (part 1 of 3)

Our colleague John Danaher recently pondered the possibility of a “Reverse Turing Test” in this intriguing blog post dated 21 July 2016. That is, instead of testing for a machine’s ability to think like a human, what if we tested for a human’s ability to think like a machine? (This theoretical paper by law professor Brett Frischmann on “Human-Focused Turing Tests” is what led Danaher to pose this novel question.) Moreover, according to Danaher (and to Frischmann), the ability to think like a machine may have some serious ethical implications. For our part, we have often wondered whether ethical rules like Kant’s famous “Categorical Imperative” or the Golden Rule could be reduced to a simple computer program, and we have long been fascinated by the Turing Test; by way of example, we used the original version of Alan Turing’s famous test to develop the notion of “probabilistic verdicts” in this paper. Accordingly, we will be blogging about the ideas in Danaher’s post and in Frischmann’s paper over the next few days.

Kant vs. Strauss vs. postmodernism

If you had to choose, would you rather read 300 pages on Kantian nonsense, on Straussian esotericism, or on postmodernist garbage? Our colleague Jason Brennan, a philosophy professor at Georgetown University, wrote up this sarcastic taxonomy of the most common types of PhD dissertations in the fields of political philosophy and political theory, a comprehensive classification based on his personal experience of having served on many search committees for post-docs and junior candidates. (Hat tip: Brian Leiter.) The even-numbered abstracts were our personal favorites:

4. Incomprehensible Kantian Nonsense: “I’m going to argue that some policy P is justified on Kantian grounds. This argument will take 75 steps, and will read as if it’s been translated, or, rather, partially translated, from 19th century German. It will also be completely implausible, and so, to non-Kantians, will simply read like a reductio of Kant rather than a defense of P.”

6. Incomprehensible Postmodernist Garbage: “This dissertation examines the ontic-ontological ontology of late capitalist crises through the agonistic hyperrealist lens of soda dispensers and Fall Out Boy lyrics.”

8. Straussian Esotericism: “Here are three hundred pages written about the first two pages of Locke’s third letter to his second foot doctor. My dissertation does not defend any recognizable thesis, nor is it a piece of exegesis. Non-Straussians will have no clue what I’m doing. However, other Straussians will recognize it as deep.”

Bangladesh > Russia (population)

Hat tip: Cliff Pickover

Additional critique of Baude and Sachs

We mentioned previously that our colleagues Will Baude (University of Chicago) and Stephen Sachs (Duke University) posted to SSRN a fascinating paper titled “The Law of Interpretation,” to be published in the Harvard Law Review early next year. In their paper, Baude and Sachs reframe the traditional rules of statutory and constitutional interpretation as a separate body of law. In the words of Professor Baude: “the law of interpretation can tell [judges] which of several contested theories to use when reading a text, what texts to interpret, what kind of background presumptions to use, and how to resolve uncertainties in those readings. Our model here is private law, where legal rules of interpretation are common and relatively uncontroversial …” In other words, instead of proposing a new method or micro-level theory of interpretation, Baude and Sachs claim that the existing rules of interpretation form a coherent body of law and that this “law of interpretation” is just as binding on judges as statutes and constitutions are. This is thus an ingenious macro-level theory, since the rules of legal interpretation already exist and since the analogy to private law (e.g., contracts and torts) will be familiar to all lawyers and judges.

Nevertheless, although we applaud Baude and Sachs for proposing a new way of looking at the problem of interpretation in law, there is an additional reason why we are skeptical about their macro-level theory. Simply put, even if there were a coherent and internally consistent body of interpretative rules, and even if this body of rules were considered law, these rules would not really be binding on judges in any meaningful sense. Why not? Because in addition to the potential problem of regress (which we discuss elsewhere), there is the problem of self-reference: judges not only created the rules of interpretation; they are also the institutional actors who get to apply these rules and determine their meaning. Is there a way out of this circle?